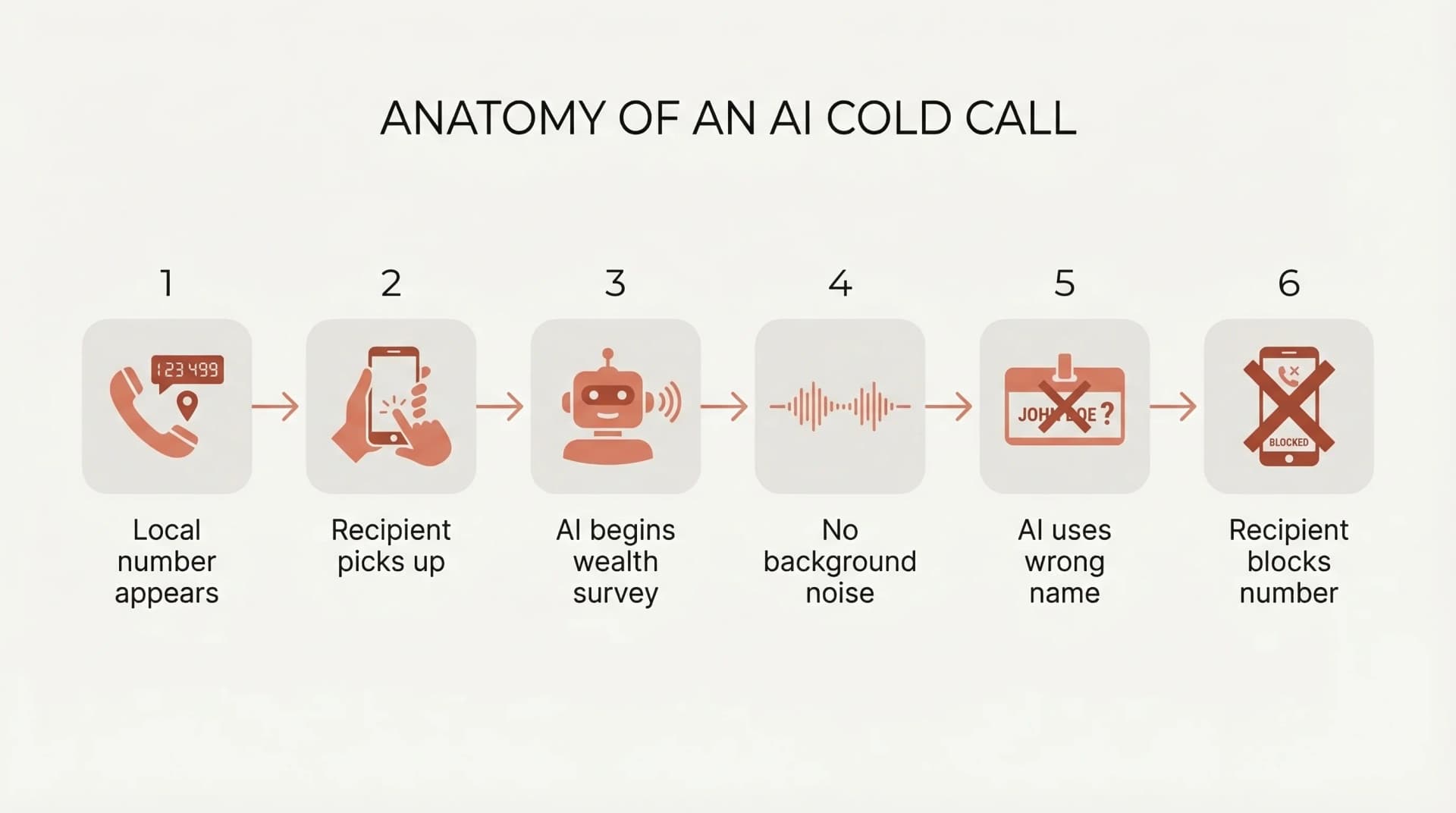

Your phone rings. Local number. You pick up.

A voice introduces itself as Ben. Kiwi accent, polite enough. Ben says he is running a wealth survey across New Zealand households and would like to ask a few questions.

You pause. Something is off. The voice is too smooth. There is no background noise. Not quiet. Dead. When you give your name, Ben calls you Emily.

I build AI voice systems for a living. Inbound call handling for trades businesses, outbound follow-up for compliance workflows, bespoke voice automation for operations teams that need calls made at scale. When I read about NestEdge's AI agent "Ben" making unsolicited cold calls to Kiwis, my reaction was immediate: this is not how you do outbound AI calling. The technology is capable of genuine value. Using it for deceptive cold outreach poisons the well for everyone building it properly.

What happened with NestEdge's AI agent "Ben"?

NestEdge is an Auckland-based company incorporated in late 2024. Its AI telemarketing agent "Ben" placed unsolicited calls to New Zealand households, claiming to conduct a "wealth survey" and using local phone numbers so the calls appeared domestic. The voice technology was competent. The deployment was not.

Wellington resident Maggie O'Leary-Noyer described the experience to the NZ Herald: the voice sounded real initially, but there was zero background noise. When she gave her name, the AI called her Emily and did not correct itself.

The public response was predictable and brutal. Reddit threads about NestEdge multiplied so fast that Google results for the company were dominated by people describing its calls as scammy. Others urged people to normalise not answering unknown numbers. NestEdge did not respond to the Herald's inquiries.

Why did NestEdge's approach fail so badly?

Unsolicited AI cold calling fails because it stacks deception on top of intrusion. The technology is not the problem. The deployment context is. NestEdge made every wrong choice available to them, and the backlash was proportional.

Where does trust break down in an unsolicited AI call?

Trust in an unsolicited AI call breaks down at the point of realisation. The recipient discovers they are talking to a machine they did not ask to speak with, operating under a pretence they did not agree to. Consumer NZ research shows people already assume unknown local numbers are scams. AI does not fix that deficit. It deepens it.

Phone calls from unknown numbers carry a trust deficit before anyone speaks. NestEdge's approach compounded this at every step. Local numbers to appear domestic. A wealth survey framing that was a pretext for data extraction. A voice designed to pass as human. Each layer eroded trust further.

The fundamental issue is not that AI made the call. It is that nobody asked for the call. An unsolicited call has no prior relationship, no permission, and no value proposition the recipient requested. The AI is sophisticated enough to sound human for a few seconds, but not sophisticated enough to recover when the mask slips. That moment of realisation destroys whatever thin thread of engagement existed.

What does "Ben" reveal about the consent problem?

The most advanced voice AI in the world cannot manufacture consent. If the person on the other end did not ask to be called, and was not expecting the call, the technology is working against both parties. The caller gets blocked. The business gets reported on Reddit. The entire AI voice industry gets tarred with the same brush as phone scammers.

Using local phone numbers to bypass call screening is a deliberate design choice. So is the "wealth survey" framing. These are not bugs. They are features of a system designed to extract attention from people who did not volunteer it.

One Reddit comment about NestEdge captured the wider concern: "Our elderly population don't stand a chance." This is not abstract. Older New Zealanders are disproportionately vulnerable to convincing voice interactions they did not initiate. When AI voice is deployed without consent, the people least equipped to recognise it are the most affected.

Is AI cold calling even legal in New Zealand?

AI cold calling occupies a regulatory grey area in New Zealand. The Fair Trading Act 1986 prohibits misleading conduct, but the Unsolicited Electronic Messages Act 2007 covers texts and emails, not phone calls. Expect that gap to close as incidents like NestEdge accumulate.

The Commerce Commission told the Herald it had recorded 14 reported concerns related to AI chatbots or AI agents in the past year. General Manager Vanessa Horne confirmed that using AI for business automation is legal, but was clear on the boundary: businesses cannot create fake or misleading content, including misrepresenting their location.

The Fair Trading Act's prohibition on misleading conduct in trade is broad. Using local phone numbers to create the impression of a domestic caller when the call is unsolicited and AI-generated sits in contested territory. Having an AI present as human without disclosure raises further questions under the same Act.

Here is the gap that matters: voice-based telemarketing, whether human or AI, operates in less regulated space than a marketing email. That asymmetry will not survive many more NestEdge incidents. Australia's ACMA has already moved to tighten rules on AI-generated communications. The EU AI Act requires disclosure when a person is interacting with an AI. New Zealand typically follows both jurisdictions on consumer protection.

This is not legal advice. But the direction of travel is clear.

What is the actual line between deceptive cold calling and legitimate outbound AI?

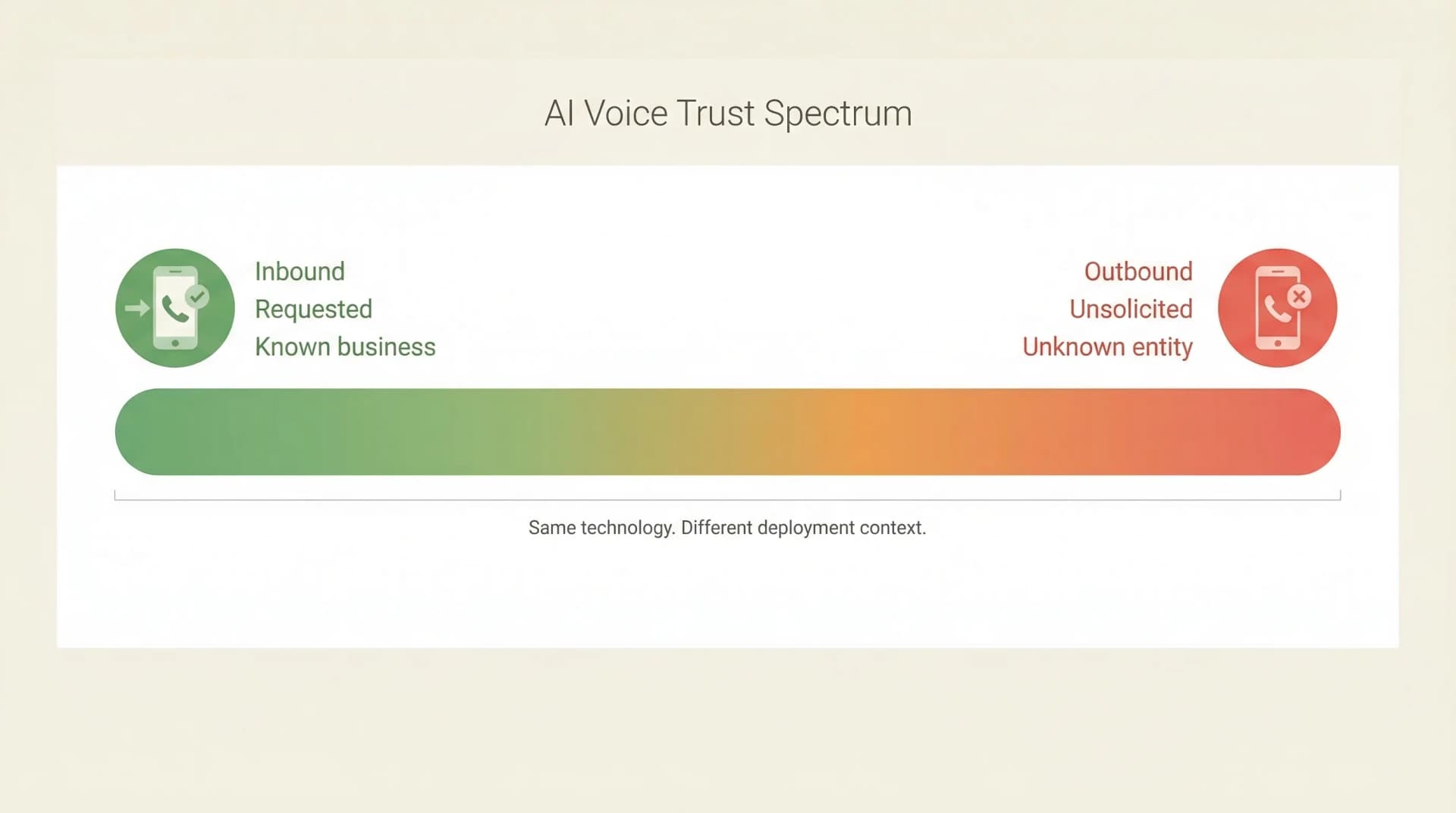

The line is not inbound versus outbound. It is consent versus no consent. This distinction matters because outbound AI voice has genuine, high-value applications that look nothing like what NestEdge built. Collapsing all outbound calling into the same category as unsolicited cold outreach is intellectually lazy and commercially wrong.

I held a harder line on this in the original version of this post. I said inbound only, outbound never. I was wrong, or at least incomplete. Since then I have built outbound AI voice systems for clients where the call is expected, the recipient has a prior relationship with the business, and the AI is doing work that genuinely serves both parties. The results were different from NestEdge in every way that matters.

What does consent-based outbound AI actually look like?

Consent-based outbound AI calls people who have a prior relationship with the business, who expect to be contacted, and who benefit from the call being made. The AI is disclosed, the purpose is clear, and the opt-out is immediate. This is not cold calling. It is operational automation.

Consider a concrete example. A health and safety compliance firm needs to follow up with 200 subcontractors whose safety documentation has expired. The subcontractors signed up to the compliance programme. They know they will be contacted when paperwork lapses. They need the reminder to keep working on site. The alternative is a human staff member spending three days working through a call list, leaving voicemails, playing phone tag.

An AI voice agent handles this in hours. It calls each subcontractor, identifies itself as an AI calling on behalf of the compliance firm, explains that their documentation has expired, and offers to help them resolve it. The subcontractor was expecting this kind of follow-up. The call is not an intrusion. It is a service.

That is a real use case I have built. The distance between this and NestEdge's "wealth survey" cold calls is not a matter of degree. It is a matter of kind.
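To make the disclosure and opt-out steps in that call concrete, here is a minimal Python sketch. It is not the system I built for the client: `opening_line`, `wants_opt_out`, `next_action`, the phrase list, and the firm name are all hypothetical, and plain keyword matching stands in for whatever intent detection a real voice platform provides.

```python
# Hypothetical sketch: disclosed opening line plus an immediate opt-out check.
# All names and phrases here are illustrative assumptions, not a real product's API.

OPT_OUT_PHRASES = ("stop calling", "do not call", "don't call", "remove me", "opt out")


def opening_line(firm_name: str, purpose: str) -> str:
    """Build an opening that discloses the AI, names the firm, and states the purpose."""
    return (
        f"Hi, this is an automated AI assistant calling on behalf of {firm_name}. "
        f"I'm calling because {purpose}. "
        "You can say 'opt out' at any time and I will end the call."
    )


def wants_opt_out(utterance: str) -> bool:
    """Crude keyword check; a production system would use proper intent detection."""
    text = utterance.lower()
    return any(phrase in text for phrase in OPT_OUT_PHRASES)


def next_action(utterance: str) -> str:
    """Decide what the agent does after the recipient speaks."""
    return "end_call_and_suppress" if wants_opt_out(utterance) else "continue"


print(opening_line("Example Compliance Ltd", "your safety documentation has expired"))
print(next_action("Please remove me from your list"))
```

The design choice worth noting is that the opt-out check runs on every utterance, not just the first, so the recipient can end the call at any point.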

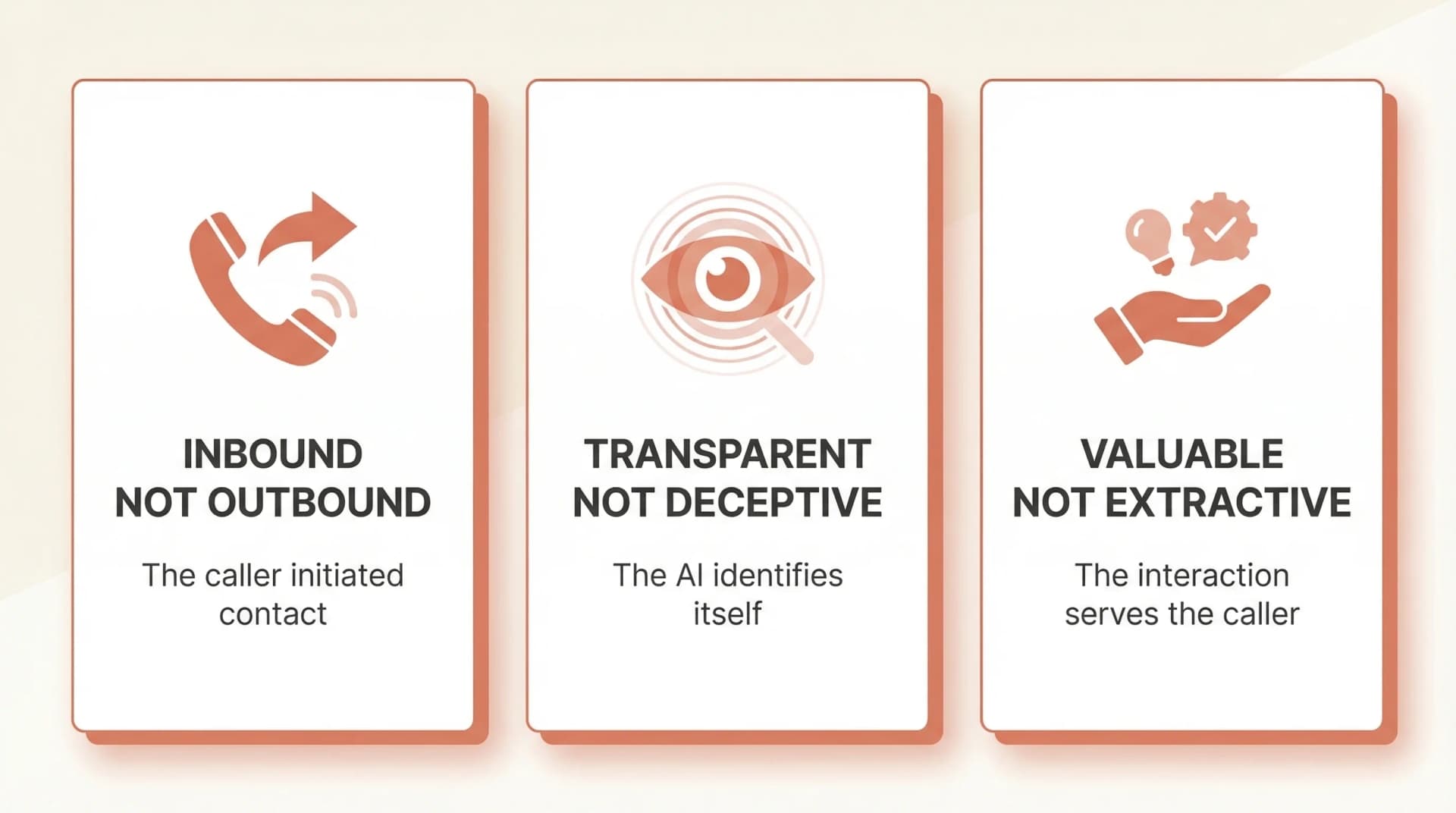

What are the three conditions for ethical outbound AI voice?

Three conditions separate legitimate outbound AI from the kind that gets you reported on Reddit. All three must be met simultaneously. Missing any one of them puts you in NestEdge territory.

Consent exists. The recipient has a prior relationship with the business and has either explicitly opted into receiving calls or is being contacted as part of a service they signed up for. This is not a technicality. It is the foundation. A subcontractor who joined a compliance programme consented to compliance follow-up. A stranger who picked up a spoofed local number did not consent to a wealth survey.

The AI is disclosed. The voice identifies itself as an AI system or, at minimum, does not actively pretend to be human. This is both an ethical baseline and increasingly a legal one. Some recipients will be surprised. Very few will object, because the call is about something they care about. Disclosure builds exactly the kind of trust that makes the interaction productive.

The value flows to the recipient. The call exists to help the person on the other end accomplish something: renew their documentation, confirm an appointment, receive a service update they need. If the only beneficiary of the call is the business making it, the value equation is backwards. NestEdge's calls extracted value from recipients. Ethical outbound delivers it.
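The three conditions can be expressed as a simple pre-call gate. The Python sketch below is an illustration under stated assumptions: the `OutboundCall` fields and the function names are hypothetical, and a single `discloses_ai` flag is a simplification of the disclosure condition described above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class OutboundCall:
    """Hypothetical description of one planned outbound AI call."""
    has_prior_relationship: bool  # recipient already deals with the business
    opted_in_or_expected: bool    # explicit opt-in, or contact is part of a service they joined
    discloses_ai: bool            # the voice identifies itself as an AI
    benefits_recipient: bool      # the call helps the recipient get something done


def failed_conditions(call: OutboundCall) -> list[str]:
    """Return the conditions the call fails; an empty list means all three are met."""
    failures = []
    if not (call.has_prior_relationship and call.opted_in_or_expected):
        failures.append("consent")
    if not call.discloses_ai:
        failures.append("disclosure")
    if not call.benefits_recipient:
        failures.append("recipient value")
    return failures


def may_place_call(call: OutboundCall) -> bool:
    """All three conditions must hold at once; failing any one blocks the call."""
    return not failed_conditions(call)


compliance_follow_up = OutboundCall(True, True, True, True)
cold_wealth_survey = OutboundCall(False, False, False, False)

print(may_place_call(compliance_follow_up))
print(failed_conditions(cold_wealth_survey))
```

Returning the list of failed conditions, rather than a bare yes or no, makes it easier to log why a call was blocked.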

How does inbound AI voice fit alongside outbound?

Inbound AI voice remains the highest-trust, lowest-risk deployment of the technology. The caller initiated the interaction. They want to reach the business. The AI is answering a call that would otherwise go to voicemail. This is service, not intrusion.

This is the design principle behind CallCover, the voice AI product I built for NZ trades businesses. A plumber is under a house. A sparky is up a ladder. The phone rings. CallCover answers, takes the message, books the job. The caller is grateful their call was answered. Nobody was interrupted or deceived.

The same principle applies at larger scale. In the Brenda Currie case study, tenants call an AI system when they have a maintenance emergency after hours. Inbound. Requested. Helpful.

For most New Zealand SMEs, inbound AI voice is still the right starting point. It solves an immediate, painful problem: missed calls. It requires no change to how customers find you. It works inside existing trust relationships. A phased approach to AI integration that starts with inbound call handling and then expands to outbound follow-up as the business matures is almost always the right sequence.

Outbound comes later, and it comes with conditions. The businesses that earn the right to deploy outbound AI are the ones that have built trust through inbound first.

What should NZ businesses learn from the NestEdge backlash?

The backlash against NestEdge is not anti-AI sentiment. It is anti-deception sentiment. People are not angry that AI can make phone calls. They are angry that AI was used to trick them into conversations they did not want to have. The lesson is precise and actionable.

Deploy AI where consent exists. Inbound calls have implicit consent. The person called you. Outbound calls require explicit consent: a prior relationship, an expectation of contact, a clear reason for the call. If that consent is missing, do not make the call.

Disclose. Always. The Commerce Commission's position on misleading conduct is not ambiguous. Disclosure builds the trust that makes the technology effective. People accept AI when they know what it is and it helps them. They reject it when they feel deceived.

Start inbound, expand to outbound. Inbound solves an immediate problem with minimal risk. Outbound requires more infrastructure, more compliance thinking, and more operational maturity. Get the first right before attempting the second.

Check who benefits. If the only beneficiary of an AI call is the business making it, the value equation is wrong. The NestEdge calls served NestEdge. The compliance follow-up calls I have built serve the subcontractor who needs to get their paperwork renewed so they can keep working. That difference is everything.

The social media reaction to NestEdge should be read as a preview of what happens to any brand that deploys AI voice without consent. Their Google results are now dominated by Reddit threads. The reputational cost dwarfs any short-term gain from unsolicited outreach.

If you are choosing an AI consultant to help integrate voice AI into your business, ask them where they sit on consent. If their pitch starts with unsolicited cold calling, find someone else. If they can articulate the difference between deceptive outreach and consent-based outbound, you are talking to someone who understands the technology and the market it operates in.

Will regulations catch up to AI voice in NZ?

New Zealand's regulatory framework for AI voice calls will tighten. The only question is timing. Businesses that build ethical practices now will not need to scramble when the rules arrive. Disclosure, consent, and value-first design are not costly changes. They are baseline operational choices.

Australia's ACMA has already moved to restrict AI-generated communications. The EU AI Act classifies AI systems that interact with humans as requiring transparency, with strict disclosure obligations. Both jurisdictions now mandate that people be told when they are interacting with an AI.

New Zealand typically follows both on consumer protection. The 14 concerns reported to the Commerce Commission in a single year, combined with the public visibility of the NestEdge incident, create exactly the kind of pressure that accelerates regulatory attention. If you are wondering where the current legal lines sit for AI receptionists in New Zealand, I have written about that separately.

The businesses that get ahead of regulation rarely regret it. The ones that get dragged there usually do.

Ben is still out there. Still calling Kiwis from local numbers. Still asking about wealth surveys. Still getting blocked.

That is one version of AI voice. There is another: AI that answers when you call a business, and AI that calls you when you need to hear from one. Same technology. The difference is consent.

I build inbound and consent-based outbound systems, and the line between those and unsolicited cold calling is not negotiable. If you want to understand how AI voice fits your business, whether inbound, outbound, or both, get in touch.

Frequently asked questions

- Is it legal to use AI for cold calling in New Zealand?

AI for business automation is legal in New Zealand, but the Fair Trading Act 1986 prohibits misleading conduct in trade. Using AI to impersonate a human without disclosure, or using local phone numbers to create a false impression of origin, may breach these provisions. The Commerce Commission has recorded 14 reported concerns about AI chatbots or AI agents in the past year.

- What is the difference between AI cold calling and consent-based outbound AI?

AI cold calling contacts people with no prior relationship and no expectation of the call, often using deceptive tactics like local number spoofing. Consent-based outbound AI contacts people who have a prior relationship with the business, expect to be contacted, and benefit from the call. The technology is identical. The deployment context is everything.

- Does EmbedAI build outbound AI calling systems?

Yes, as bespoke consulting engagements. EmbedAI builds outbound AI voice systems for businesses where the recipient has a prior relationship, the call is expected, and the AI is disclosed. Use cases include compliance follow-up, appointment reminders, and service notifications. EmbedAI does not build unsolicited cold calling systems.

- How can NZ businesses use AI voice ethically?

Three conditions must be met: the recipient has given consent or has a prior relationship, the AI identifies itself or does not pretend to be human, and the call delivers value to the recipient rather than extracting it. Businesses should start with inbound AI voice and expand to outbound only with appropriate consent infrastructure.

- What is CallCover and does it handle outbound calls?

CallCover is EmbedAI's AI phone answering product for New Zealand trades businesses. It handles inbound calls when staff are unavailable, taking messages, booking appointments, and answering enquiries. CallCover is an inbound product. Outbound AI voice is handled through EmbedAI's bespoke consulting services.