Three years before Smart Assess went live, we built a crisis-response platform that kept insurance device claims moving through New Zealand's COVID-19 lockdown. That platform asked policyholders to photograph their damaged smartphones and submit them for remote human assessment. It worked. It processed 80 to 100 claims per day when the alternative was zero.

But it also did something we did not plan for. It generated a dataset.

Every photo submitted through the virtual assessment system was reviewed by an authorised technician who recorded the damage type, severity, and repair-or-replace determination. Over three years and more than 216,000 assessments, we accumulated a richly categorised library of real-world device damage. Not stock photos. Not lab-controlled images. Actual cracked screens, water-damaged internals, and shattered housings photographed by stressed policyholders on their kitchen tables.

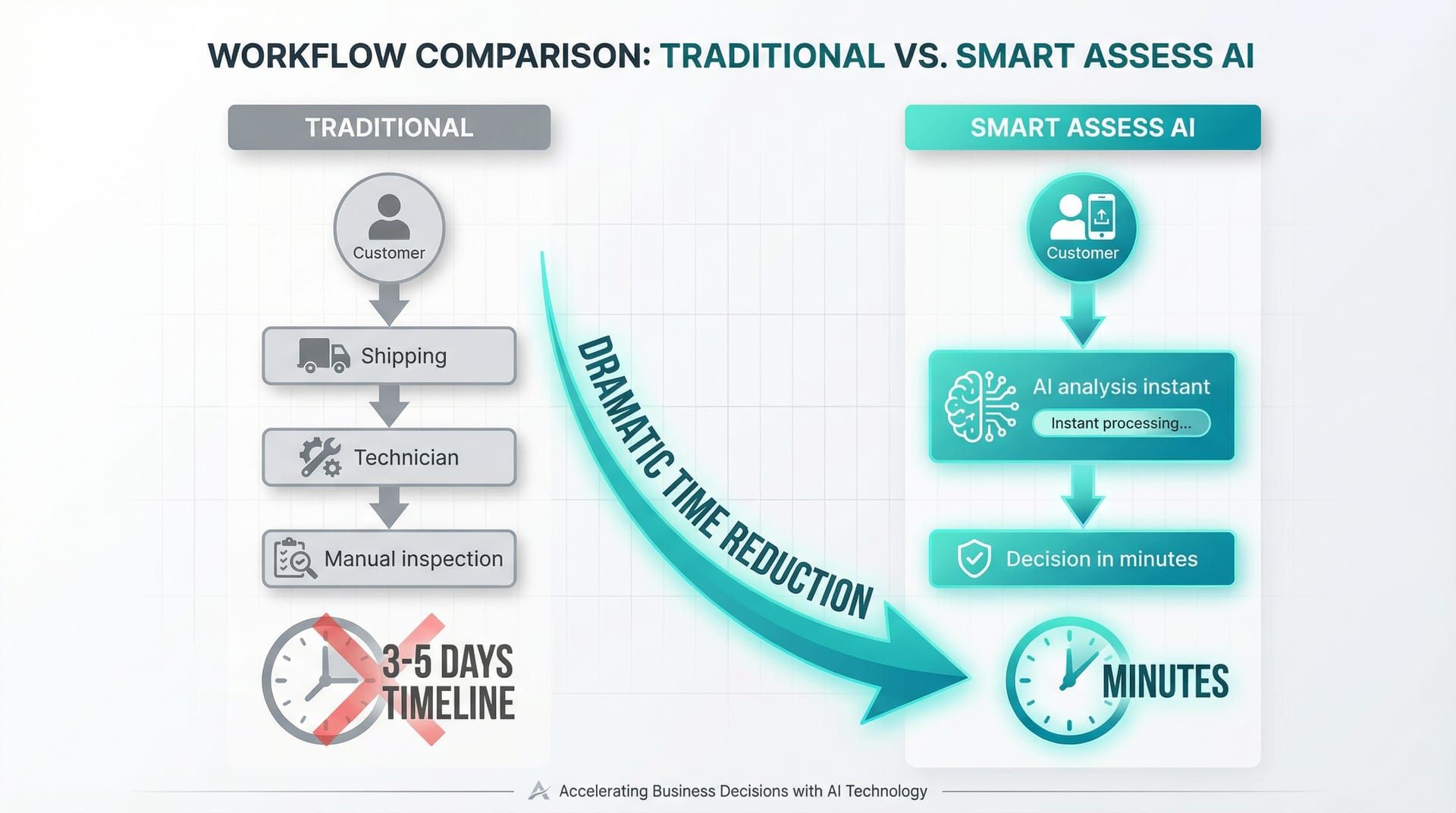

Smart Assess is what happened when we pointed computer vision at that dataset: an AI-powered damage assessment system for insurance claims that reduces smartphone claim settlement determination from 3-5 days to minutes, with 95%+ accuracy validated by human technicians on every claim.

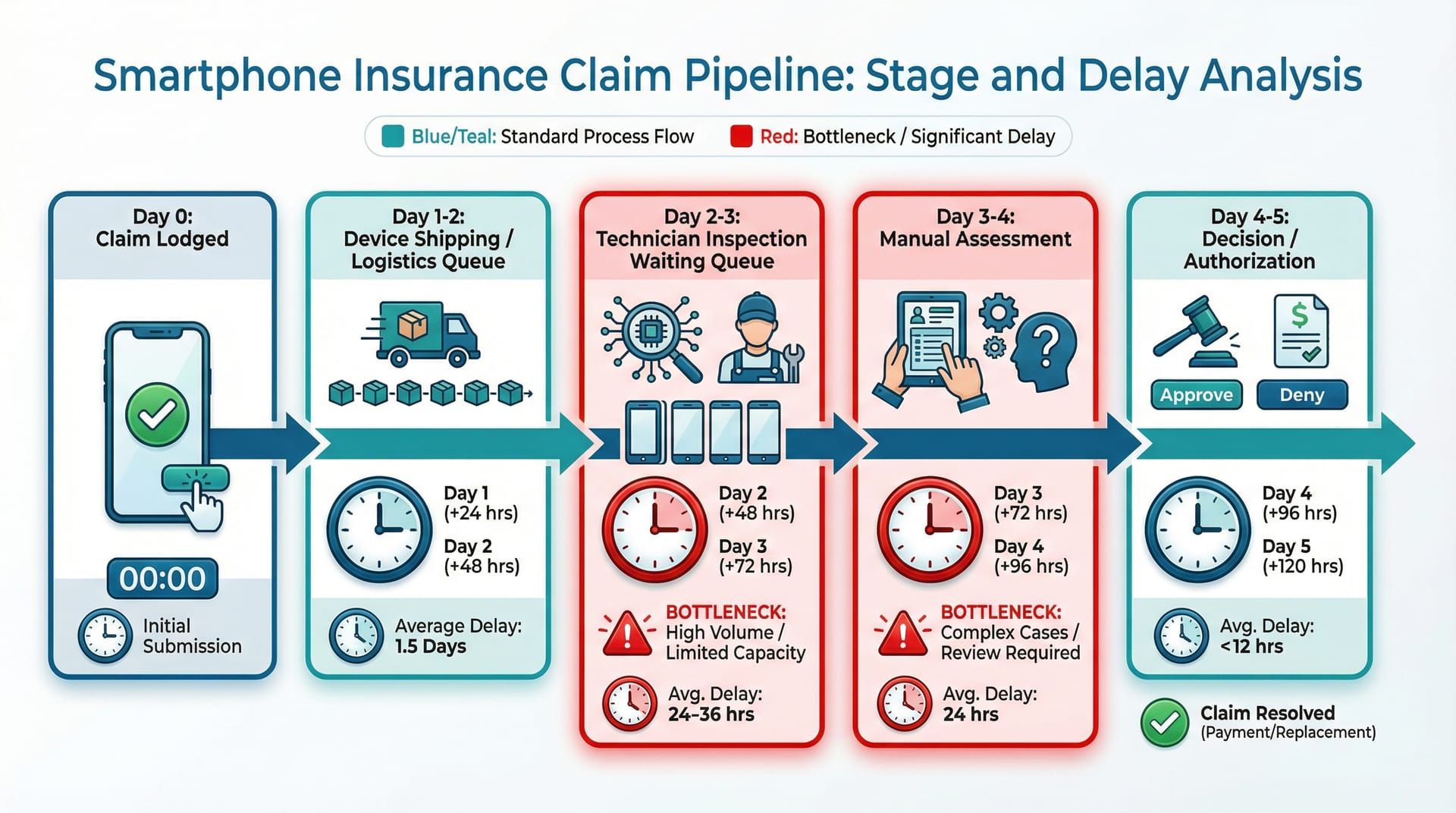

Why does a smartphone insurance claim take 3-5 days to settle?

Smartphone insurance claims in New Zealand traditionally require physical device inspection by authorised technicians, creating a multi-day pipeline of logistics, scheduling, and manual assessment that delays settlement by 3-5 days even for straightforward damage like cracked screens. Computer vision AI eliminates the assessment bottleneck by classifying damage from photographs in seconds rather than days.

The settlement delay is not caused by complexity. The vast majority of smartphone claims are simple. A cracked screen. A shattered back panel. Water damage with visible corrosion. An experienced technician can look at most damaged smartphones and make a repair-or-replace determination in under two minutes. The 3-5 days is not thinking time. It is logistics time.

Here is what that pipeline actually looks like. The policyholder lodges a claim with their insurer. The insurer authorises an assessment and notifies the assessment provider. The assessment provider contacts the policyholder to arrange device drop-off or courier collection. The device travels to an assessment centre. It sits in a queue. A technician picks it up, inspects it, documents the damage, and records a determination. The result feeds back to the insurer for settlement authorisation.

Each step in the traditional assessment pipeline adds hours or days. The actual technical assessment takes minutes.

Each step in that chain adds time. Most of that time is waiting. Waiting for a courier. Waiting in a queue. Waiting for the technician's availability. The policyholder, meanwhile, has a broken phone. If it is their work phone, they are losing business. If it is their only phone, they are unreachable.

For the insurers we worked with (IAG, Vero, and Tower), the compound cost went beyond policyholder frustration. Every day a claim sat in the pipeline was a day of operational overhead: tracking, status updates, customer service calls asking "where is my claim?", and the administrative weight of thousands of open cases at any given time. A three-day average claim life across tens of thousands of annual claims means a permanent backlog of work-in-progress that never clears.
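Why the backlog never clears follows from Little's Law, a general queueing result rather than a figure from the insurers' own data. If new claims arrive at an average rate of λ claims per day and each stays open for an average of W days, then the average number of claims open at any moment, L, is:

```latex
L = \lambda W
\qquad\Longrightarrow\qquad
\frac{L_{\text{after}}}{L_{\text{before}}}
  = \frac{\lambda\, W_{\text{after}}}{\lambda\, W_{\text{before}}}
  = \frac{W_{\text{after}}}{W_{\text{before}}}
```

At a steady arrival rate, the standing work-in-progress scales directly with claim life, so for the claims whose life is cut from days to minutes, the open backlog shrinks by the same factor.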

The human technician doing the assessment was never the bottleneck. Their expertise was being wasted on logistics rather than judgement. We needed to separate the assessment decision from the physical handling of the device, and the COVID virtual assessment platform had already proven that photographs could carry enough information for that decision. What Smart Assess added was the ability to make that decision without a human looking at every photo.

Smart Assess removes the logistics chain entirely for straightforward claims, reducing the assessment step from days to minutes.

How does computer vision turn a smartphone photo into a claims decision?

Smart Assess uses a machine learning model trained on 216,000+ categorised device damage photographs to automatically detect damage type, quantify severity, and generate repair-or-replace recommendations from customer-submitted images. The model achieves 95%+ accuracy, validated through a human-in-the-loop system where every AI assessment is confirmed by an authorised technician.

The core principle is the same one that made the virtual assessment platform work: you do not always need to hold a device to know what is wrong with it. A photograph of a cracked screen carries the same information whether a human or a machine learning model analyses it. The difference is speed and consistency.

We started from the outcome and worked backward. The outcome we needed was a confidence-scored damage classification that could feed directly into the existing claims workflow, triggering either a repair authorisation or a replacement dispatch without human intervention for clear-cut cases. Working backward from that, we needed three things: a model that could classify damage accurately, a training dataset that reflected real-world conditions, and a validation system that maintained trust.

How did 216,000 virtual assessments become training data?

Over three years of COVID-initiated virtual device assessments for IAG, Vero, and Tower, every customer-submitted photo was paired with an authorised technician's damage classification and repair-or-replace determination. This produced 216,000+ expert-labelled images of real-world device damage, creating a training dataset that no amount of stock photography or lab simulation could replicate.

The training dataset was the single most valuable asset in this project, and we did not set out to create it. When we built the virtual assessment platform in March 2020, the immediate goal was business continuity. Policyholders submitted photos. Technicians assessed them. Determinations were recorded. The system was designed to process claims, not to train a future AI model.

But every assessment generated a labelled data point. A set of photographs paired with a technician's damage classification and repair-or-replace determination. Over three years of continuous operation across IAG, Vero, and Tower's device claims portfolios, that dataset grew to more than 216,000 categorised damage images.
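Each completed virtual assessment is essentially one record: a set of photos plus the technician's determination. The sketch below shows one way such records could be flattened into per-image training examples. The field names (`claim_id`, `photo_paths`, `damage_type`, `decision`) are hypothetical, and the sketch assumes every photo in a claim inherits that claim's labels; the source does not say whether labels were attached per claim or per image.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class VirtualAssessment:
    """One completed virtual assessment (hypothetical schema)."""
    claim_id: str
    photo_paths: List[str]  # customer-submitted photos for this claim
    damage_type: str        # technician's damage classification
    decision: str           # technician's "repair" or "replace" determination


def to_training_examples(
    assessments: List[VirtualAssessment],
) -> List[Tuple[str, str, str]]:
    """Flatten assessments into (photo_path, damage_type, decision) examples.

    Assumption: every photo in a claim carries the labels the technician
    recorded for that claim as a whole.
    """
    examples = []
    for assessment in assessments:
        for path in assessment.photo_paths:
            examples.append((path, assessment.damage_type, assessment.decision))
    return examples
```

A claim with two photos yields two examples sharing the same labels, which is how a few years of assessments can add up to a six-figure image count.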

This is not the kind of dataset you can buy or synthesise. Stock photo libraries of "cracked phone screens" are useless for training a production model because they do not reflect reality. Real claim photos are taken by non-technical people in poor lighting on kitchen tables. They include fingers in frame, reflections, odd angles, and varying image quality. A model trained on clean lab photos would fail on real submissions. Ours was trained on the exact kind of messy, imperfect images it would encounter in production.

Training data from real claims submissions includes the full range of quality, lighting, and angles that a production model must handle.

The categorisation came from authorised technicians, people with years of hands-on device repair experience. Their classifications were not academic labels. They were operational decisions that had downstream consequences: repair authorisations, parts orders, replacement dispatches. This meant the training labels carried the weight of real expertise rather than crowd-sourced annotation.

What does the model actually classify?

The Smart Assess computer vision model performs three distinct tasks on each submitted image set: damage type identification (screen crack, LCD failure, housing damage, water ingress, or cosmetic damage), severity quantification mapped to repair-or-replace thresholds, and a confidence-scored recommendation that routes directly into the insurer's claims workflow.
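To make the three outputs concrete, here is one possible shape for a single assessment result. The five damage categories come from the list above; the 0-to-1 severity scale, the 0.7 replacement cut-off, and every name in the code are illustrative assumptions, not Smart Assess's actual values.

```python
from dataclasses import dataclass
from enum import Enum


class DamageType(Enum):
    SCREEN_CRACK = "screen_crack"
    LCD_FAILURE = "lcd_failure"
    HOUSING_DAMAGE = "housing_damage"
    WATER_INGRESS = "water_ingress"
    COSMETIC = "cosmetic"


# Hypothetical severity cut-off: at or above this, recommend replacement.
REPLACE_SEVERITY = 0.7


@dataclass(frozen=True)
class Assessment:
    damage_type: DamageType  # task 1: what kind of damage
    severity: float          # task 2: 0.0 (minor) to 1.0 (severe), assumed scale
    confidence: float        # model's confidence in this result, 0.0 to 1.0

    @property
    def recommendation(self) -> str:
        """Task 3: map severity onto a repair-or-replace recommendation."""
        return "replace" if self.severity >= REPLACE_SEVERITY else "repair"
```

Keeping confidence separate from severity matters: a device can be confidently classified as lightly damaged, or tentatively classified as badly damaged, and those two cases should be handled differently downstream.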

These three tasks mirror what a human technician does when they pick up a damaged device. They look at the damage and categorise it. They judge how bad it is. They decide whether to repair or replace. The difference is that the model does all three in seconds, and it does them consistently. The fifteenth cracked screen of the day gets the same attention as the first.

Claims where the model determined from the photos that the device was not repairable were routed directly to the retail provider for replacement authorisation. No queuing. No waiting. The policyholder received a replacement determination in minutes rather than days. Claims that fell into the repairable category, or where the model's confidence was below threshold, were flagged for human review with the AI's preliminary classification already attached.
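The routing rule described above fits in a few lines. This is a minimal sketch: the 0.90 threshold, the route names, and the `Prediction` type are assumptions for illustration, since the actual threshold is not stated.

```python
from dataclasses import dataclass

# Hypothetical confidence threshold; the production value is not published.
CONFIDENCE_THRESHOLD = 0.90


@dataclass(frozen=True)
class Prediction:
    damage_type: str
    recommendation: str  # "repair" or "replace"
    confidence: float    # 0.0 to 1.0


def route(prediction: Prediction) -> str:
    """Decide where a claim goes next.

    Only confident "not repairable" calls skip the review queue and go to the
    retail provider. Repairable claims and low-confidence calls go to a
    technician, who sees the model's preliminary result.
    """
    if (prediction.recommendation == "replace"
            and prediction.confidence >= CONFIDENCE_THRESHOLD):
        return "retail_replacement"
    return "technician_review"
```

The asymmetry is deliberate in this sketch: the fast path is narrow, and anything the model is unsure about falls back to the human path rather than the other way round.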

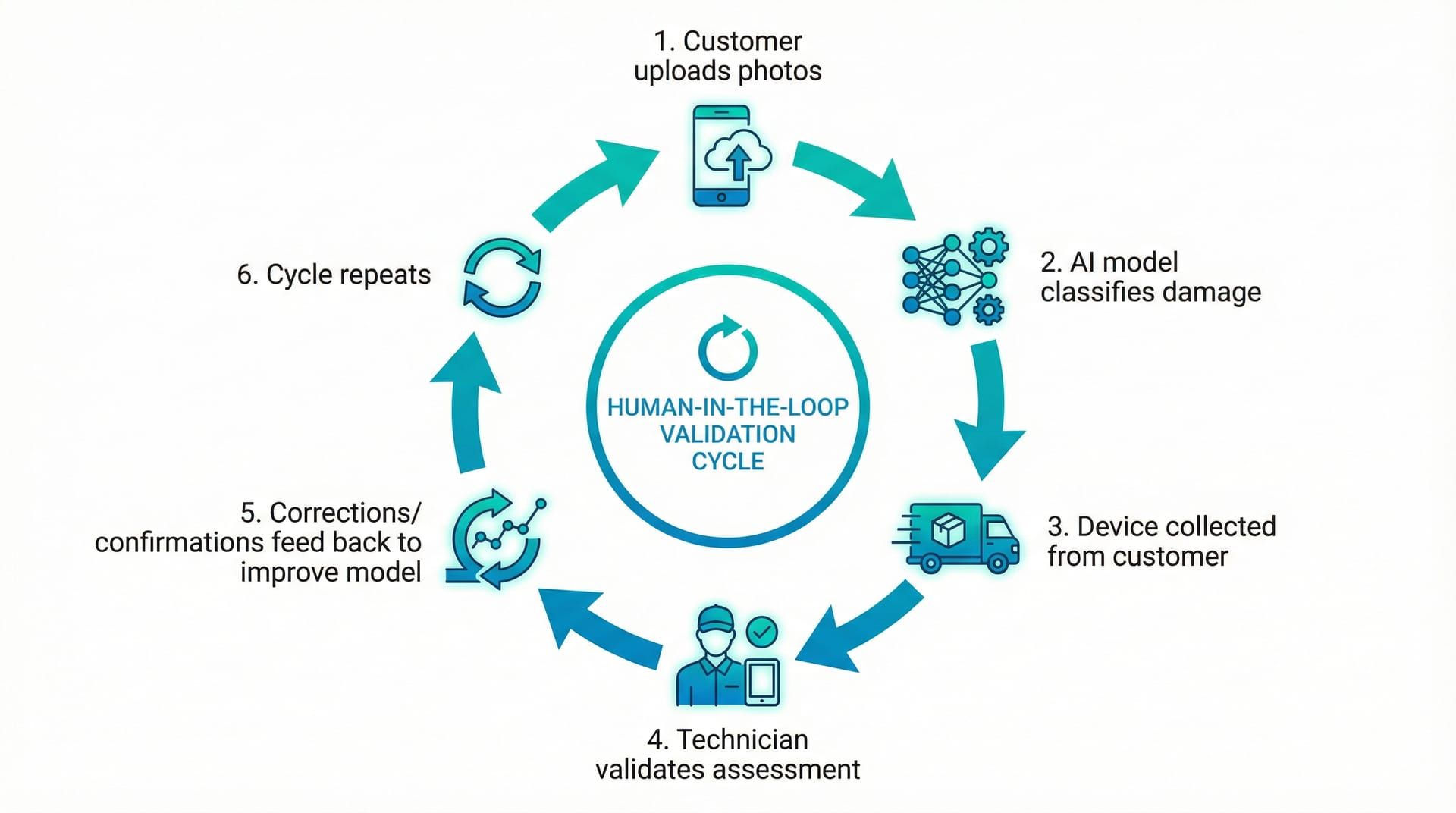

How does the human-in-the-loop validation work?

Smart Assess uses a dual-purpose validation system where every AI-assessed device is physically collected and inspected by an authorised human technician. The technician confirms or corrects the AI's determination, maintaining insurer-grade quality assurance while simultaneously generating corrective training data that improves the model's accuracy with every assessment.

Every device assessed through Smart Assess still had a physical touchpoint. This was not optional. The key shift was in the technician's role. They were no longer the primary assessor starting from a blank slate. They were a validator working with the AI's preliminary classification already in hand. This changed the nature of the work. Instead of "what is wrong with this device?", the question became "did the AI get it right?"

This dual-purpose validation system served two functions simultaneously. First, it maintained the quality assurance that insurers require. No claim was settled purely on an AI's say-so. Every determination had human confirmation. Second, every validation was a training signal. When a technician confirmed the AI's assessment, that photo gained a verified label. When they corrected it, that was a labelled correction that refined the model's accuracy for similar cases going forward.
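A minimal sketch of how one technician review could become a training example, assuming each label is a single damage category. The names are hypothetical. The key idea, taken from the description above, is that the technician's label is the ground truth whether it confirms or corrects the AI; the extra flag is an assumed convenience so corrections could be inspected or weighted separately during retraining.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Validation:
    photo_id: str
    ai_label: str          # the model's preliminary classification
    technician_label: str  # the technician's final classification

    @property
    def confirmed(self) -> bool:
        return self.ai_label == self.technician_label


def training_example(v: Validation) -> Tuple[str, str, str]:
    """Return (photo_id, ground_truth_label, outcome).

    The technician's label is always used as ground truth; outcome records
    whether the review confirmed or corrected the AI.
    """
    outcome = "confirmed" if v.confirmed else "corrected"
    return (v.photo_id, v.technician_label, outcome)
```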

The validation loop creates a self-improving system where every human correction makes the next AI assessment more accurate.

The model improved continuously. Each batch of validated assessments was fed back into the training pipeline, refining the model's ability to handle edge cases and ambiguous damage. The 95%+ accuracy figure was not a fixed launch metric. It was a running figure that rose over time as the validation loop accumulated more confirmed assessments.
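One natural reading of a technician-validated accuracy figure is an agreement rate: the share of AI determinations that technicians confirmed without correction. The sketch below computes that for a batch of (AI label, technician label) pairs. Treating agreement as the accuracy metric is an assumed definition for illustration, not a description of how Smart Assess reports its figure.

```python
from typing import Iterable, Tuple


def agreement_rate(pairs: Iterable[Tuple[str, str]]) -> float:
    """Fraction of (ai_label, technician_label) pairs where the two agree."""
    total = 0
    agreed = 0
    for ai_label, technician_label in pairs:
        total += 1
        if ai_label == technician_label:
            agreed += 1
    if total == 0:
        raise ValueError("no validated assessments in batch")
    return agreed / total


# Example: 19 confirmations and 1 correction in a batch of 20.
batch = [("screen_crack", "screen_crack")] * 19 + [("cosmetic", "housing_damage")]
rate = agreement_rate(batch)  # 0.95
```

Tracking this per batch, rather than once at launch, is what lets the figure move as retraining absorbs each round of corrections.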

This architecture also served as a trust mechanism for the insurance partners. AI making autonomous claims decisions is a hard sell in a regulated industry. AI making recommendations that are always validated by a qualified human is a much easier conversation. The human-in-the-loop was not a crutch. It was a feature that made adoption possible.

What happened when Smart Assess went live?

When Smart Assess was deployed for IAG, Vero, and Tower's smartphone claims portfolios, it reduced claim settlement determination time from 3-5 days to minutes for straightforward damage cases, while maintaining 95%+ accuracy validated against human technician assessments. The system processed claims faster than physical assessment had ever achieved, and every AI determination was confirmed by an authorised human assessor.

The first thing that changed was the claim lifecycle. A policyholder with a clearly damaged device, a smashed screen with no ambiguity, went from a multi-day wait to a same-day determination. Photos submitted in the morning could have a replacement authorisation before lunch. For claims identified as non-repairable, the determination went straight to the retail provider without the device ever entering a physical assessment queue.

The second thing that changed was technician utilisation. Instead of spending their expertise on straightforward cases that any experienced assessor could resolve at a glance, technicians focused on the cases that genuinely required human judgement: ambiguous damage, multi-component failures, and devices with potential fraud indicators. The AI handled the volume. Humans handled the complexity.

Daily throughput and accuracy metrics gave insurers real-time visibility into the system's performance.

The third change was the one that mattered most to the insurers' bottom line. Operational cost per claim dropped because the logistics chain shortened. Fewer devices needed physical collection for initial assessment. Fewer customer service calls came in asking about claim status, because claims resolved faster. The permanent backlog of work-in-progress shrank because claims cleared the pipeline in hours rather than days.

The evolution from crisis response to permanent AI solution took three years and followed a path we could not have planned. The COVID virtual assessment platform was built in two weeks to solve an immediate problem. Smart Assess was built over months to solve a structural one. But the training data that made Smart Assess possible only existed because the crisis platform had been running continuously for three years, generating labelled data with every assessment.

This is a pattern we see repeatedly in our consulting work. The best AI solutions are not the ones conceived in a boardroom and built from scratch. They are the ones that emerge from operational data, trained on the decisions your experts have already been making, and deployed to make those decisions faster and more consistently. It is also why our three-phase delivery model starts with Discovery: you need to understand what data your business already generates before you can build anything meaningful with it.

What technology powers Smart Assess?

Computer Vision (ML) — Core damage classification model trained on 216,000+ real-world device damage images, performing damage type detection, severity quantification, and repair-or-replace recommendation with confidence scoring.

Image Processing Pipeline — Intake and normalisation of customer-submitted photographs, handling variable quality, resolution, lighting, and orientation to produce consistent inputs for the classification model.
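The pipeline's internals are not described here, so the following only illustrates one common normalisation step: letterboxing, which scales each photo to fit a fixed square input without distortion and pads the remainder. The 512-pixel target and the function name are assumptions. The sketch computes only the geometry; actual resampling would be done with an imaging library, typically after correcting orientation from the photo's EXIF metadata.

```python
from typing import Tuple


def letterbox_geometry(width: int, height: int,
                       target: int = 512) -> Tuple[int, int, int, int]:
    """Return (new_w, new_h, pad_x, pad_y) to fit an image into target x target.

    The image is scaled so its longer side equals `target`, preserving aspect
    ratio, then padded evenly on the shorter side. Every photo therefore
    reaches the model at the same resolution, whatever phone took it.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scale = target / max(width, height)
    new_w = round(width * scale)
    new_h = round(height * scale)
    pad_x = (target - new_w) // 2  # padding on the left (and roughly the right)
    pad_y = (target - new_h) // 2  # padding on the top (and roughly the bottom)
    return new_w, new_h, pad_x, pad_y
```

For a 4032 x 3024 landscape photo this gives a 512 x 384 image with 64 pixels of padding above and below.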

Virtual Assessment Platform — Customer-facing photo submission interface inherited from the COVID-19 response build, with guided capture flow for structured image collection.

Technician Validation Interface — Assessment review dashboard where authorised technicians confirm or correct AI determinations, with corrections feeding back into model retraining.

Claims Integration Layer — API bridge connecting Smart Assess determinations to existing insurer claims management systems, routing non-repairable devices directly to retail providers for replacement authorisation.
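The insurers' claims APIs are not documented here, so this is only a sketch of the kind of message such a bridge might send: a JSON determination carrying the AI result, a destination, and a flag that human validation is still required. The field names and destination values are hypothetical. For brevity the sketch routes on the recommendation alone; a real bridge would also respect the confidence threshold before sending anything to a retail provider.

```python
import json


def build_determination_payload(claim_id: str, damage_type: str,
                                recommendation: str, confidence: float) -> str:
    """Serialise an AI determination for a downstream system (hypothetical schema)."""
    if recommendation not in ("repair", "replace"):
        raise ValueError(f"unexpected recommendation: {recommendation!r}")
    payload = {
        "claim_id": claim_id,
        "damage_type": damage_type,
        "recommendation": recommendation,
        "confidence": round(confidence, 3),
        # Non-repairable devices go to the retail provider for replacement
        # authorisation; everything else returns to the insurer's claims system.
        "destination": "retail_provider" if recommendation == "replace" else "insurer_claims",
        # Every AI determination is still confirmed by a technician.
        "requires_validation": True,
    }
    return json.dumps(payload, sort_keys=True)
```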

Training Pipeline — Continuous model refinement system ingesting validated assessment pairs (AI prediction + technician confirmation/correction) to improve classification accuracy over time.

FileMaker Pro (ERP) — Existing job management system managing claim records, technician assignments, device tracking, and integration with insurer portals for IAG, Vero, and Tower.

FAQ

Can computer vision AI accurately assess smartphone damage from photos?

Yes. Our Smart Assess system achieves 95%+ accuracy in classifying smartphone damage from customer-submitted photographs. The model was trained on over 216,000 categorised real-world damage images and is validated by authorised human technicians on every claim. Accuracy improves continuously as the human-in-the-loop validation feeds corrections back into the training pipeline.

How does AI reduce insurance claim settlement time in New Zealand?

AI damage assessment eliminates the logistics bottleneck in traditional claims processing. Instead of collecting a device, queuing it for inspection, and waiting for a technician's availability, AI classifies damage from submitted photos in seconds. For NZ insurers like IAG, Vero, and Tower, Smart Assess reduced smartphone claim determination from 3-5 days to minutes for straightforward cases.

What training data does an AI damage assessment model need?

The most effective training data comes from real operational assessments, not stock photos or lab-controlled images. Smart Assess was trained on 216,000+ photographs from actual insurance claims, taken by real customers in uncontrolled conditions. Each image was categorised by an authorised technician, providing expert-quality labels that reflect genuine assessment decisions rather than academic annotations.

Does AI replace human assessors in insurance claims?

No. Smart Assess uses a human-in-the-loop model where AI makes the initial damage classification and every determination is validated by an authorised human technician. The AI handles speed and consistency for high-volume straightforward claims. Human expertise focuses on complex, ambiguous, or borderline cases. This validation also continuously improves the AI model through corrective feedback.

Does EmbedAI build AI solutions for NZ insurance companies?

Yes. EmbedAI has delivered computer vision, claims automation, and operational AI systems for New Zealand's largest insurers including IAG, Vero, and Tower. Our insurance and property industry practice draws on years of hands-on experience in claims processing, device assessment, and insurer integration. Contact us to discuss your claims automation requirements.